improved-purple

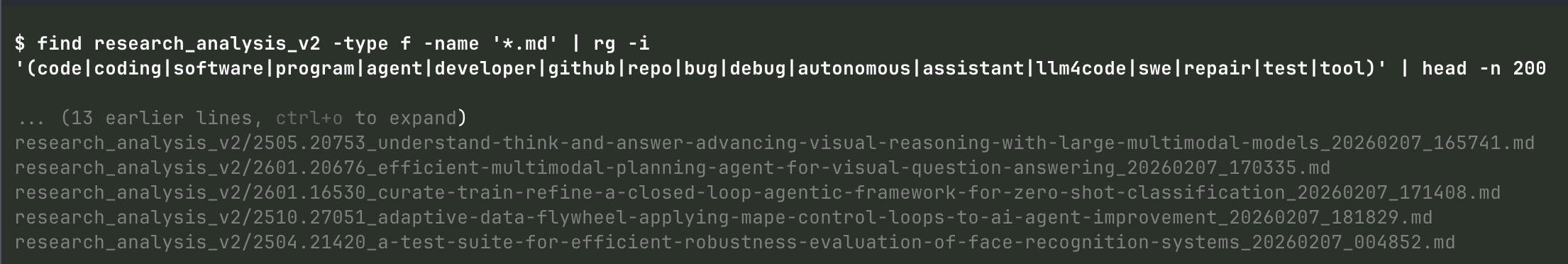

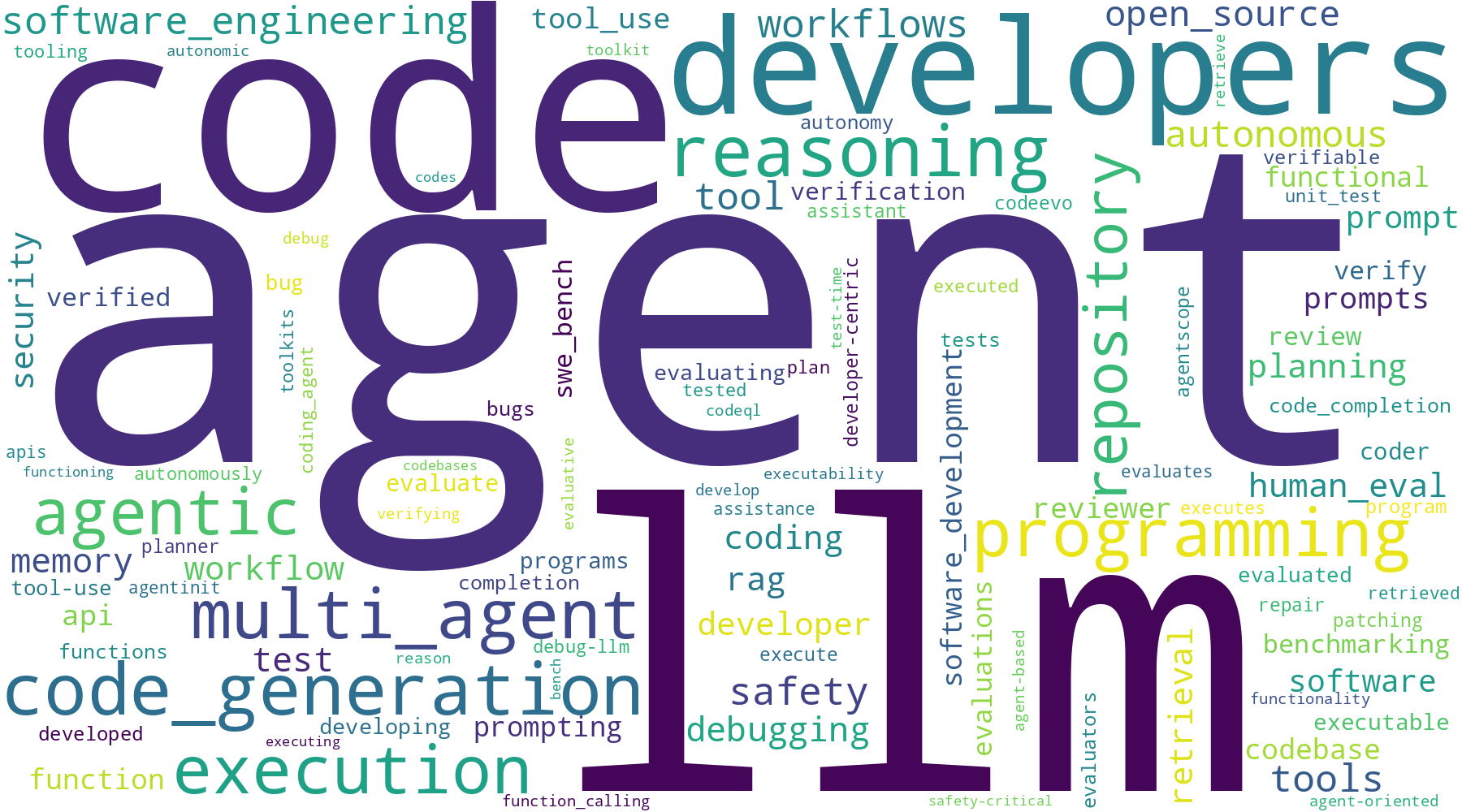

If you are familiar with my LLM 2025 research digest: I am currently building a word cloud of terms related to "coding assistant". I will feed those terms back into the corpus to find the top X papers on LLM coding (2025 to now) and add them to the summary/analysis corpus. The idea is to have a sort of research flywheel for SOTA practices.
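As a rough illustration of the "feed the terms back into the corpus" step, here is a minimal sketch. It assumes the term list is a CSV with `term` and `count` columns and that each paper has a title and an abstract; those column names, the helper names, and the substring-matching scoring are all hypothetical, not what the actual pipeline does.

```python
import csv
import io


def load_term_weights(csv_text: str) -> dict[str, float]:
    """Parse 'term,count' rows into a lowercase term -> weight mapping.

    The 'term' and 'count' column names are assumptions for this sketch.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row["term"].strip().lower(): float(row["count"]) for row in reader}


def score_paper(abstract: str, weights: dict[str, float]) -> float:
    """Sum the weights of every term that appears in the abstract.

    Plain substring matching: crude, but enough to show the idea.
    """
    text = abstract.lower()
    return sum(weight for term, weight in weights.items() if term in text)


def top_papers(
    papers: dict[str, str], weights: dict[str, float], x: int
) -> list[tuple[str, float]]:
    """Return the x highest-scoring (title, score) pairs, best first."""
    scored = [(title, score_paper(abstract, weights)) for title, abstract in papers.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:x]
```

Substring matching will over-count short terms (for example "agent" inside "reagent"), so a real version would probably want tokenization or the corpus's own search.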

I have learned some really interesting tidbits. Research applications are hard, of course. Probably the highest-impact application is the research pipeline itself, since I am actively working on it. One example is the judge: https://github.com/memgrafter/research-crawler-flatagents/blob/main/research_paper_analysis_v2/config/completeness_judge.yml

I learned 4 valuable things about judges in research:

* Judge reliability is not ideal, so you can't use its scores at fine granularity.

* A judge is best used to gauge adherence to general layout/expectations, not factual accuracy or detail quality, and especially not overall quality. Only throw out scores of 0-2 out of 10, for example. In my case I reduced it to pass/repair/fail. Maybe that was dumb of me, but it seems right.

* Weaker models still make good judges (and routers).

* Don't try to repair/recast output. Redo it: if you don't get one of your terms, things went wrong. (MDAP/MAKER paper)
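A minimal sketch of how the coarse-verdict and redo-instead-of-repair points could fit together. The function names, the 0-2 fail band as a default, and the attempt cap are assumptions for illustration; this is not the config in the linked `completeness_judge.yml`.

```python
from typing import Callable


def coarse_verdict(score: int, fail_max: int = 2) -> str:
    """Collapse a 0-10 judge score into a coarse verdict.

    Only the bottom band (0..fail_max) is rejected; everything else passes,
    because the judge is too noisy to trust finer distinctions.
    """
    if not 0 <= score <= 10:
        raise ValueError(f"score out of range: {score}")
    return "fail" if score <= fail_max else "pass"


def generate_with_redo(
    generate: Callable[[], str],
    judge: Callable[[str], int],
    max_attempts: int = 3,
) -> tuple[str, int]:
    """Regenerate from scratch until the judge passes the output.

    A failed output is discarded, never patched. Returns the accepted
    output and the number of attempts it took.
    """
    for attempt in range(1, max_attempts + 1):
        output = generate()
        if coarse_verdict(judge(output)) == "pass":
            return output, attempt
    raise RuntimeError(f"no passing output after {max_attempts} attempts")
```

Here `generate` and `judge` stand in for whatever model calls you use. The judge can be a weaker, cheaper model, since it only needs to catch outputs that are clearly off.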

(The research pipeline is in shambles, of course, as all good research pipelines are, but v2 is shaping up, and I will be able to extract some best practices from it.)

Regarding the word cloud, it's amazing what you can do without multi-agent workflows. I didn't really expect codex-5.3 (high) in pi to generate this PNG file for me... what the hell? Anyway, I'll pass this back through the research search and try to go deep on programming research next.

top_terms.csv (1.73 KB)