Langfuse "input" empty

Telemetry

I am using "@mastra/langfuse": "1.0.2" with a Mastra agent that uses the gpt-5.1 LLM.

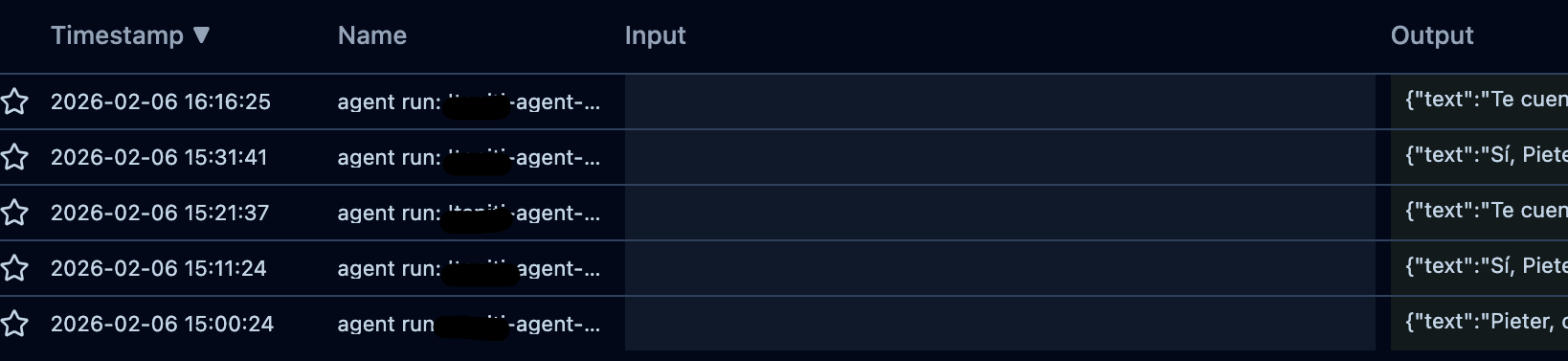

When I look at traces in Langfuse, the input is not shown. I have to drill down to the LLM trace to see the actual user query. Also, the output contains the full object rather than just the text.

Is there a way to configure the root observation to have the values Langfuse expects, so that the input and output show up correctly in the Langfuse dashboard?
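
To make it concrete, here is a rough sketch of the mapping I'm after. The `ChatMessage` and `AgentResult` shapes and both helper names are hypothetical, made up for illustration; they are not Mastra's or Langfuse's actual types or API.

```typescript
// Hypothetical shapes for illustration only (not Mastra or Langfuse types).
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface AgentResult {
  text: string;
  [key: string]: unknown; // usage, tool calls, and other metadata
}

// Desired root input: the most recent user message, as plain text.
function toDisplayInput(messages: ChatMessage[]): string {
  const lastUser = [...messages].reverse().find((m) => m.role === "user");
  return lastUser?.content ?? "";
}

// Desired root output: only the generated text, not the full result object.
function toDisplayOutput(result: AgentResult): string {
  return result.text;
}
```

In other words, I'd like the root observation to carry something like `toDisplayInput(messages)` as its input and `toDisplayOutput(result)` as its output, while the full objects stay on the nested observations.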