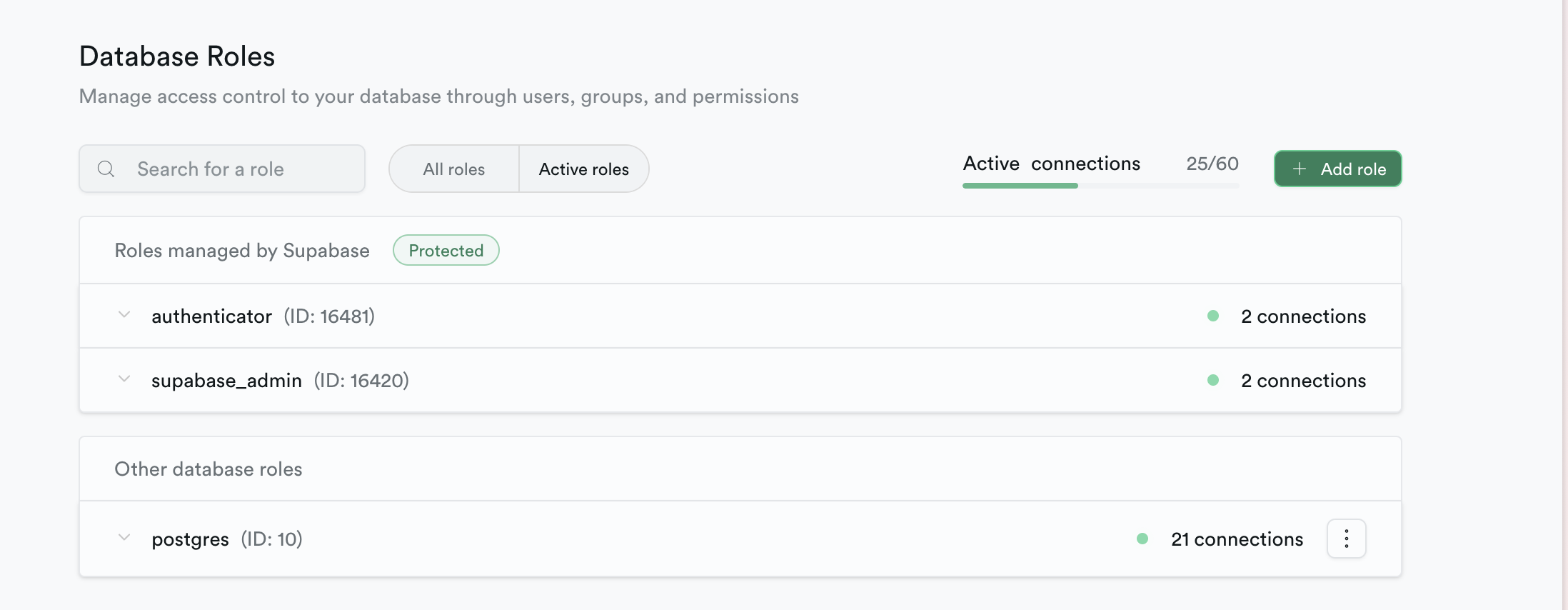

Getting "Max client connections reached" with only 25/60 connections

I am using Supabase in transaction mode with Prisma in a serverless Next.js application hosted on Vercel. My database URL is:

`postgres://postgres.<ref>:<password>@aws-0-us-east-1.pooler.supabase.com:6543/postgres?pgbouncer=true&connect_timeout=20&connection_limit=1&pool_timeout=20`

As a test, I sent 1,000 requests to my database and got a "Max client connections reached" error. After that, every API route returns the same error. Isn't the whole point of Supabase that it should handle cases like this? And how can it show 35 connections still available (only 25 of 60 in use) yet report that the maximum number of clients has been reached?
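One way to reason about the mismatch is to read the Prisma parameters out of the pooled URL and estimate how many *client* connections a burst could open. The sketch below does that under stated assumptions, none of which the post confirms. It assumes each concurrent serverless invocation runs its own Prisma Client, so each may hold up to `connection_limit` connections to the pooler. It also assumes that the 25/60 figure counts connections to Postgres itself, while "Max client connections reached" refers to a separate cap on connections *to the pooler*. The helper names (`parsePoolSettings`, `worstCaseClientConnections`) and the `ASSUMED_MAX_CLIENT_CONNECTIONS` value are hypothetical placeholders, not Supabase or Prisma APIs.

```typescript
// Sketch only: inspect the pooled connection string and estimate the worst-case
// number of client connections a burst of serverless invocations could open.
// ASSUMPTION: every concurrent invocation runs its own Prisma Client instance,
// so each one may hold up to `connection_limit` connections to the pooler.

interface PoolSettings {
  host: string;
  port: number;
  pgbouncer: boolean;
  connectionLimit: number;
  poolTimeoutSec: number;
  connectTimeoutSec: number;
}

// Hypothetical helper: pull the Prisma-specific query parameters out of the URL.
function parsePoolSettings(databaseUrl: string): PoolSettings {
  const url = new URL(databaseUrl);
  const q = url.searchParams;
  // Read a numeric query parameter, failing loudly if it is absent or invalid.
  const num = (name: string): number => {
    const raw = q.get(name);
    if (raw === null) throw new Error(`missing ${name}`);
    const value = Number(raw);
    if (!Number.isFinite(value)) throw new Error(`invalid ${name}: ${raw}`);
    return value;
  };
  return {
    host: url.hostname,
    port: Number(url.port),
    pgbouncer: q.get("pgbouncer") === "true",
    connectionLimit: num("connection_limit"),
    poolTimeoutSec: num("pool_timeout"),
    connectTimeoutSec: num("connect_timeout"),
  };
}

// Hypothetical helper: worst case is every in-flight invocation holding its
// full Prisma pool open at the same time.
function worstCaseClientConnections(settings: PoolSettings, concurrentInvocations: number): number {
  return settings.connectionLimit * concurrentInvocations;
}

// HYPOTHETICAL placeholder: the pooler's client-connection cap depends on the
// project's plan/compute size; substitute the value shown for your project.
const ASSUMED_MAX_CLIENT_CONNECTIONS = 200;

// Same shape as the URL in the post, with the placeholders left as-is.
const DATABASE_URL =
  "postgres://postgres.<ref>:<password>@aws-0-us-east-1.pooler.supabase.com:6543/postgres" +
  "?pgbouncer=true&connect_timeout=20&connection_limit=1&pool_timeout=20";

const settings = parsePoolSettings(DATABASE_URL);
// The post fired 1,000 requests; assume (hypothetically) all run concurrently.
const needed = worstCaseClientConnections(settings, 1000);

console.log(settings);
console.log(
  `worst case: ${needed} client connections vs assumed cap ${ASSUMED_MAX_CLIENT_CONNECTIONS}`,
);
```

With `connection_limit=1`, each invocation is small, but the total still scales with concurrency. If that reading is right, a burst of 1,000 simultaneous invocations could exceed a client cap even while Postgres itself reports spare server connections.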