Handle PersistentVolume for multiple LAPI replicas in Kubernetes environment

Hello,

I deployed CrowdSec on our Kubernetes cluster and I want it to be highly available in case one of the LAPI pods goes down.

So in the Helm values I set lapi.replicas=3 and lapi.strategy.type=RollingUpdate.

But when the 3 LAPI pods are created, one succeeds and the other two fail because they can't attach to the same volume.

Indeed, I set lapi.persistentVolume.config=true and lapi.persistentVolume.data=true.

My 1st question: what are these two values used for?

My 2nd question: if both values are really useful in my case, how can I make each LAPI replica get its own PersistentVolume?

Thanks in advance

When using multiple replicas for LAPI, persistent volumes are not supported, as this would require ReadWriteMany, which is rarely supported and often a bad idea.

If you use an external database (i.e., not sqlite), there's no need for the data PV, and the config PV is only useful to store the CAPI credentials, but it can be avoided if you set lapi.storeCAPICredentialsInSecret to true

Alright, what is stored in the data PV?

For LAPI:

- data PV: the sqlite database if using the default sqlite backend

- config PV: the various config files, but you can provide all/most of them from configmaps, so it's not really useful anymore

Alright, thank you. I use a PostgreSQL database, so I don't need to deploy the data PV, right?

yes

alright thank you
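
For reference, the setup discussed above could be expressed in Helm values roughly as follows. This is a minimal sketch, not the chart's definitive layout: it assumes the persistent volume toggles live under lapi.persistentVolume.data.enabled and lapi.persistentVolume.config.enabled (the thread writes them without .enabled), and that the database settings are supplied through a config.yaml.local override. The PostgreSQL host, user, database name and the DB_PASSWORD variable are placeholders. Check the key names against the values.yaml of your chart version.

```yaml
# Sketch only: key layout is assumed, verify against your chart's values.yaml
lapi:
  replicas: 3
  strategy:
    type: RollingUpdate
  # Keep the CAPI credentials in a Kubernetes Secret instead of the config PV
  storeCAPICredentialsInSecret: true
  persistentVolume:
    data:
      enabled: false   # not needed when using an external database
    config:
      enabled: false   # config is provided from ConfigMaps and the Secret above
config:
  # Assumed override mechanism; all connection values below are placeholders
  config.yaml.local: |
    db_config:
      type: postgresql
      user: crowdsec
      password: "${DB_PASSWORD}"
      db_name: crowdsec
      host: postgresql.example.svc
      port: 5432
```

With both volumes disabled, no replica needs to attach to a shared volume, which is what caused two of the three pods to fail.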

I have one last question, unrelated to the previous one:

How can I disable the debug logs of the crowdsec agents in the Helm values?

I set env.LEVEL_DEBUG=false but debug logs are still generated

Can you show me an example of those debug logs?

It could come either from the global config of crowdsec or from a parser/scenario (especially if you have added a custom parser/scenario; the ones from the hub do not have debug enabled by default)

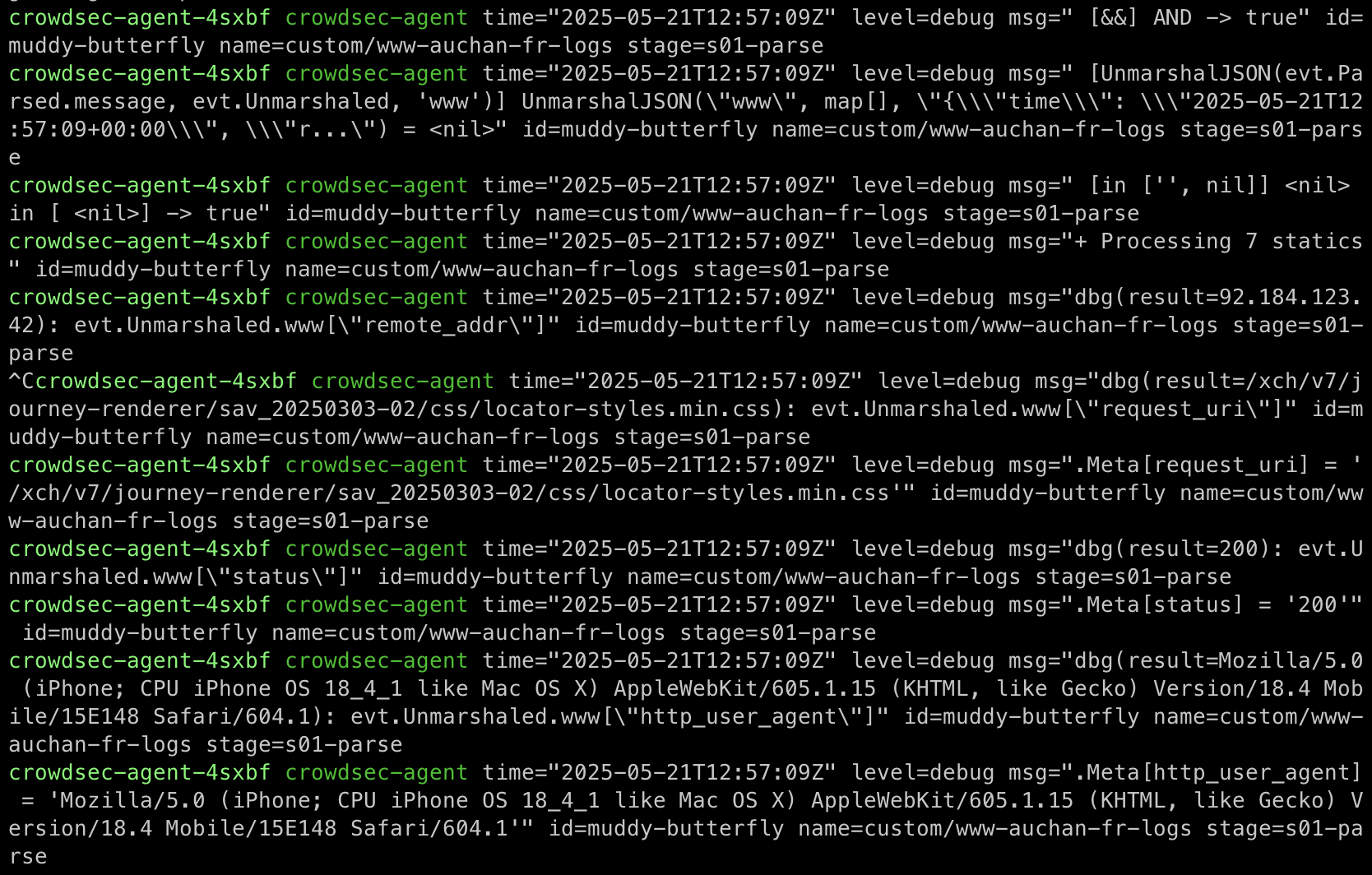

Here is an example of debug-level logs from the crowdsec agents.

Indeed, I created custom parsers and scenarios.

you probably have debug: true in one of your custom parsers

Yeah ok I have to set them to false, sorry

thank you for your reply, you can close this ticket
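
To illustrate the fix mentioned above, here is a hypothetical custom parser with the debug flag turned off. Only the debug key comes from the thread; the name, filter and grok pattern are made up for illustration and will differ in your own parsers.

```yaml
# Hypothetical custom parser: name, filter and pattern are illustrative only
onsuccess: next_stage
debug: false   # was true; a true value here makes this parser emit debug logs
filter: "evt.Parsed.program == 'myapp'"
name: example/myapp-logs
description: "Parse myapp log lines"
grok:
  pattern: "%{IP:source_ip} %{GREEDYDATA:message}"
  apply_on: message
```

Removing the debug line entirely should have the same effect, since the thread notes that hub parsers do not enable it by default. The same check applies to custom scenarios.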

@blotus could this error be due to a PersistentVolume error?

time="2025-05-26T15:44:24Z" level=fatal msg="crowdsec init: while initializing LAPIClient: authenticate watcher (crowdsec-agent-pbszp): API error: ent: machine not found"

could be if you were still using sqlite (but you mentioned using postgresql)

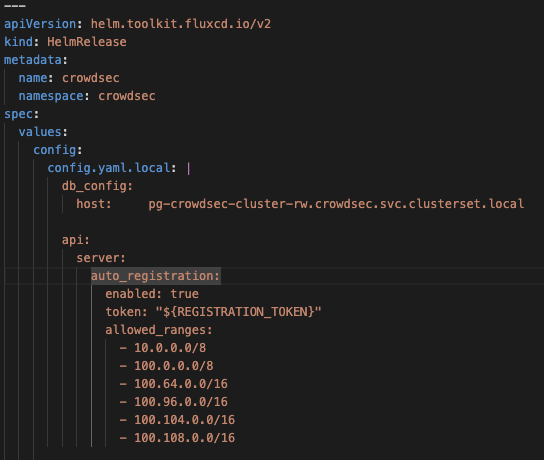

or it could also be that you modified the config.yaml directly in the PV, and the one passed from the config map does not have the PG config?

possible, but I configured a postgresql database

You mean the crowdsec PV? Because I disabled it

yeah

but you are passing the proper config from the config map so it should be ok

yes i see it

that's why idk why there is this error

is it possible that when the LAPI replicas restart, the registration token changes, and the current agents are not registered to the new LAPI replicas with the new registration token?

@blotus