How do I know when my pod has the GPU available again?

Do I just keep refreshing the page?

I moved away from a network volume because it slowed down my ComfyUI generations, but now I can't pause pods because I'll lose my GPU... Is there a better way to have persistent storage while still being able to leave and come back to a GPU at max speed? I feel like I'm missing something here.

10 Replies

@Reese you can just terminate the pod and then relaunch it; there is usually some sort of GPU available

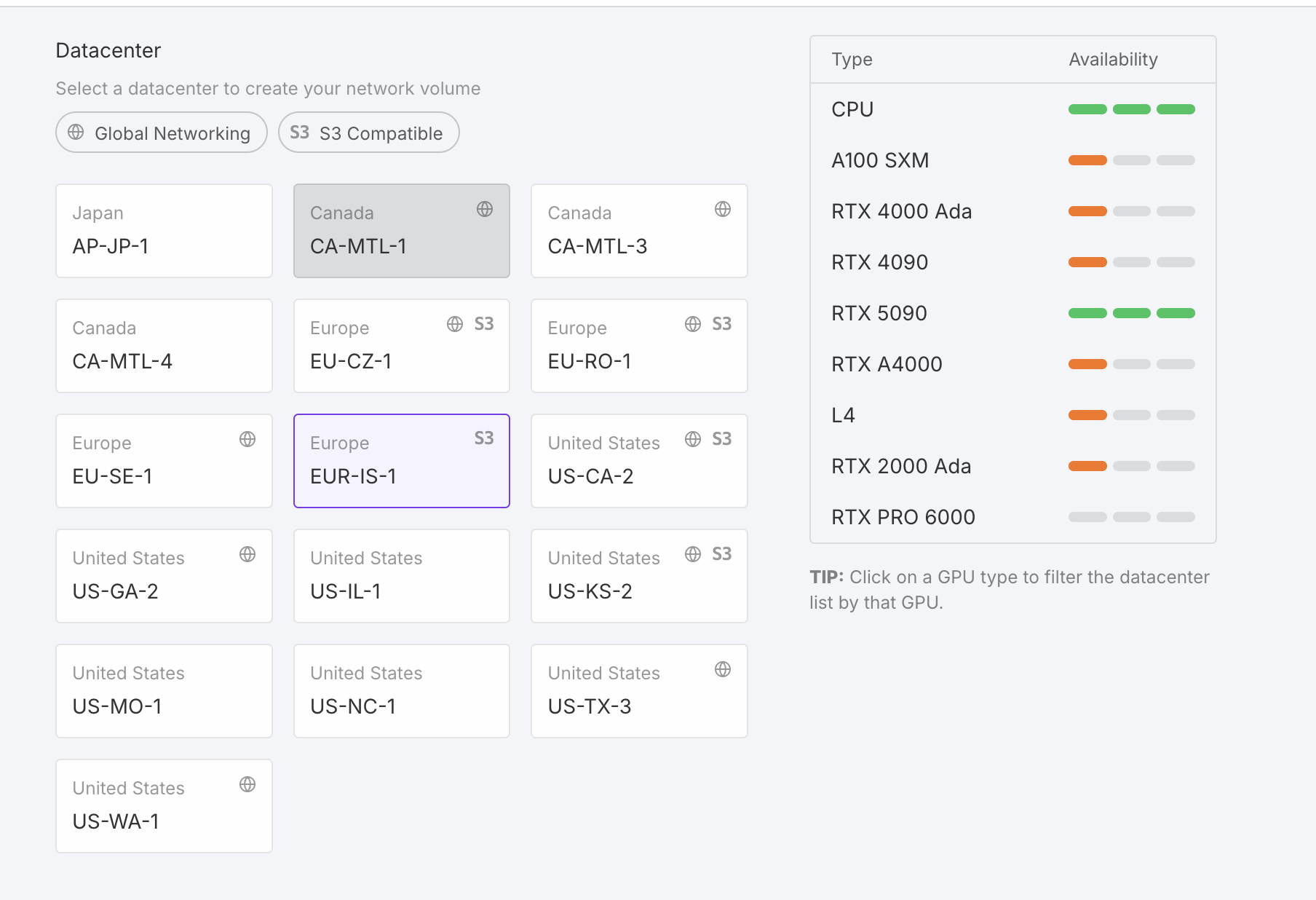

I see this region has network volumes + high availability for the 5090

But I would lose all my data. And I don't want to use network volumes because it kills my generation speed. See https://discord.com/channels/912829806415085598/1364178138048893019/1410316324042706945

what data do u have?

comfyui + models + etc

Hm, I'm not too familiar with ur setup, but is it possible to keep the models in the persistent storage at /workspace, and then just run a cp command to copy them somewhere outside of /workspace, so ComfyUI loads them from there?

that way u don't need to go through downloading the models again, but ComfyUI can load them from the machine's local disk rather than from a 'harddrive'

I think what you can actually do is mount your network volume somewhere other than /workspace, if /workspace is where your ComfyUI expects its files

do something like

/harddrive

and then just run a cp command to copy things into /workspace (which is now on the container disk)

if your template is always expecting /workspace as default

sorry tldr:

1. Use the network volume purely as storage. You can set its mount path to something other than /workspace

2. By doing so, the /workspace folder will still be on the machine rather than on an "external network volume", so it should still be fast, and you can just copy things between /workspace <> /whatever_you_call_your_networkvolume_folder

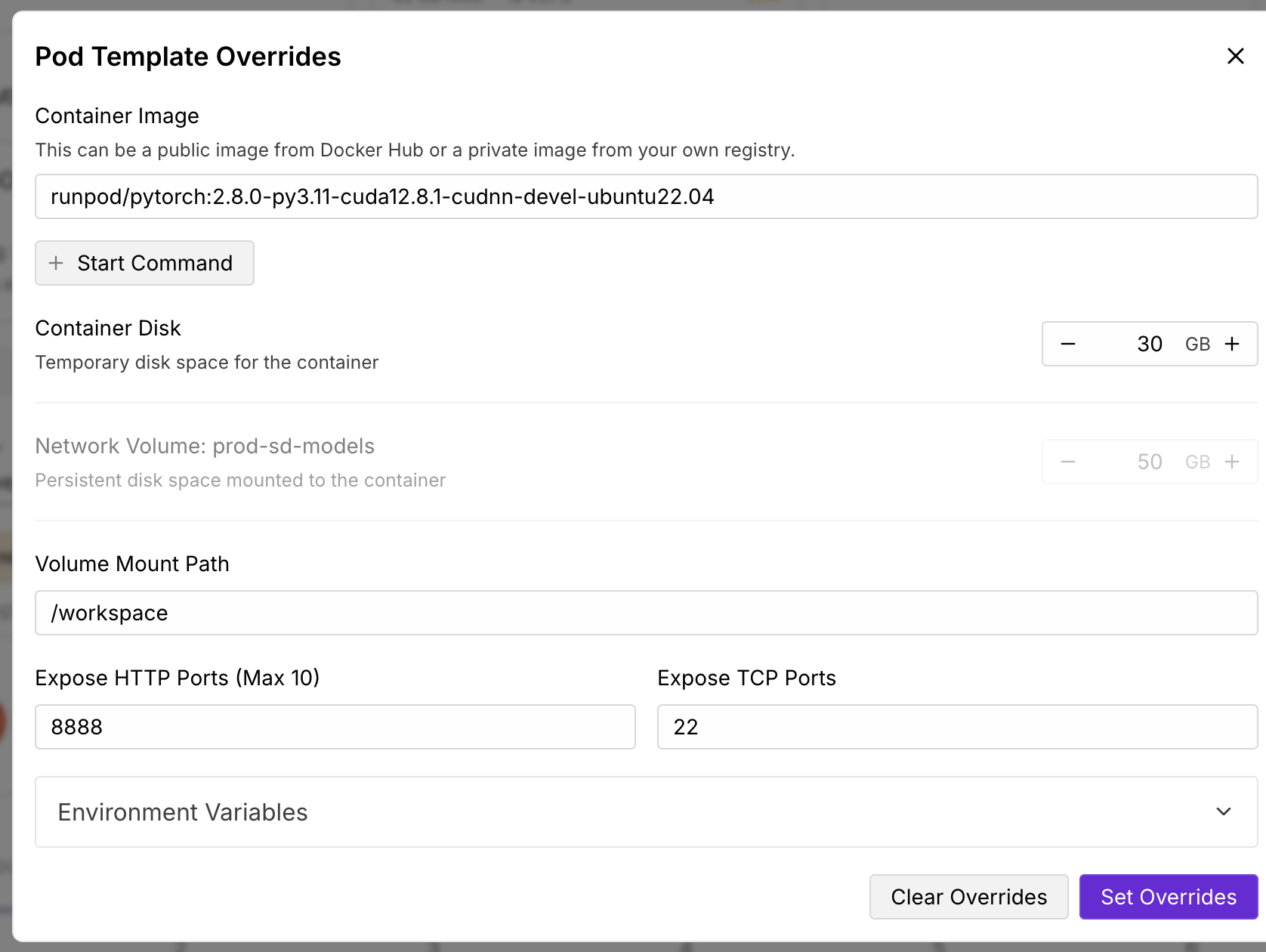

change this volume mount path

Hm hard to understand but I will try this out thank you

Sorry let me rephrase

So a network volume is basically an external harddrive you can plug into a pod. It is slow b/c everything on it is read over the network, a bit like a slow external harddrive (lol). You can think of it that way for simplicity's sake.

When you launch a pod / template, the network volume is usually mounted under the /workspace folder.

This means that anything under /workspace is your harddrive, and anything outside of it is your "actual computer".

Anything in your "actual computer" is faster to load, b/c it is native to the computer vs an external machine. can think of it as an SSD vs a harddrive.

So what I'm saying here is that if your template is loading models from /workspace, that is what is causing it to be slow, b/c it is trying to load from an external harddrive.

Why not instead attach your harddrive at a different volume mount path, such as /harddrive or /workspace2, or whatever folder name you want? Just edit the volume mount path to the new folder name.

And then when you launch comfy ui, it might not see any models at first, but all you need to do is run a terminal command such as:

cp -r /harddrive/from_folder /workspace/

so that way your /workspace is actually on the "actual computer" and should be faster to load onto comfy ui, and you can still have a persistent folder to save to for anything you want to keep around.
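A minimal sketch of that copy step, in case it helps. It assumes the network volume is mounted at /harddrive as suggested above; the function name `stage_in` and the folder names in the usage line are made up for illustration and are not something the template provides.

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy a directory from the network volume onto the
# pod's local disk. The source is left in place on the network volume, so
# nothing is lost when the pod is terminated.
stage_in() {
  local src="$1" dst="$2"
  if [ ! -d "$src" ]; then
    echo "source directory not found: $src" >&2
    return 1
  fi
  mkdir -p "$dst"
  # -a copies recursively and preserves timestamps and permissions;
  # "$src"/. copies the contents of src (hidden files included) into dst
  cp -a "$src"/. "$dst"/
}
```

Usage would be something like `stage_in /harddrive/models /workspace/models`, with both paths adjusted to wherever your models actually live.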

So what it looks like in practice is:

1. Launch a pod with a network volume under /harddrive

2. Your comfy ui launches with no model

3. Run a command like cp -r /harddrive/model_folder /workspace/

4. refresh? idk does comfy ui have a refresh button
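One thing the steps leave implicit: anything new that lands in /workspace (outputs, extra models) lives only on the pod, so it would need to be copied back to the network volume before the pod is terminated. A rough sketch of that, again assuming the volume is mounted at /harddrive; `stage_out` is a made-up helper name and the paths in the usage line are placeholders.

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy new or changed files from the pod's local disk
# back to the network volume so they survive terminating the pod.
stage_out() {
  local src="$1" dst="$2"
  if [ ! -d "$src" ]; then
    echo "source directory not found: $src" >&2
    return 1
  fi
  mkdir -p "$dst"
  # -a keeps the directory tree and timestamps; -u (GNU cp) skips files
  # whose copy on the destination is already at least as new
  cp -a -u "$src"/. "$dst"/
}
```

For example, `stage_out /workspace/output /harddrive/output` before terminating. Existing files on the network volume are left alone unless the local copy is newer.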

And this should be faster hopefully

(just a guess 😅)

So I'm using this template https://discord.com/channels/912829806415085598/1364178138048893019 (madiator2011/better-comfyui:slim-5090)

It says:

Directory Structure

/workspace/madapps/ComfyUI: Main ComfyUI installation

/workspace/madapps/comfyui_args.txt: Custom arguments file

/workspace/madapps/filebrowser.db: FileBrowser database

You're saying deploy my network volume to a pod and mount it to /harddrive. What cp command would I do then? Since if I'm understanding you correctly you're saying that having my ComfyUI installation on the network volume will slow inference speed