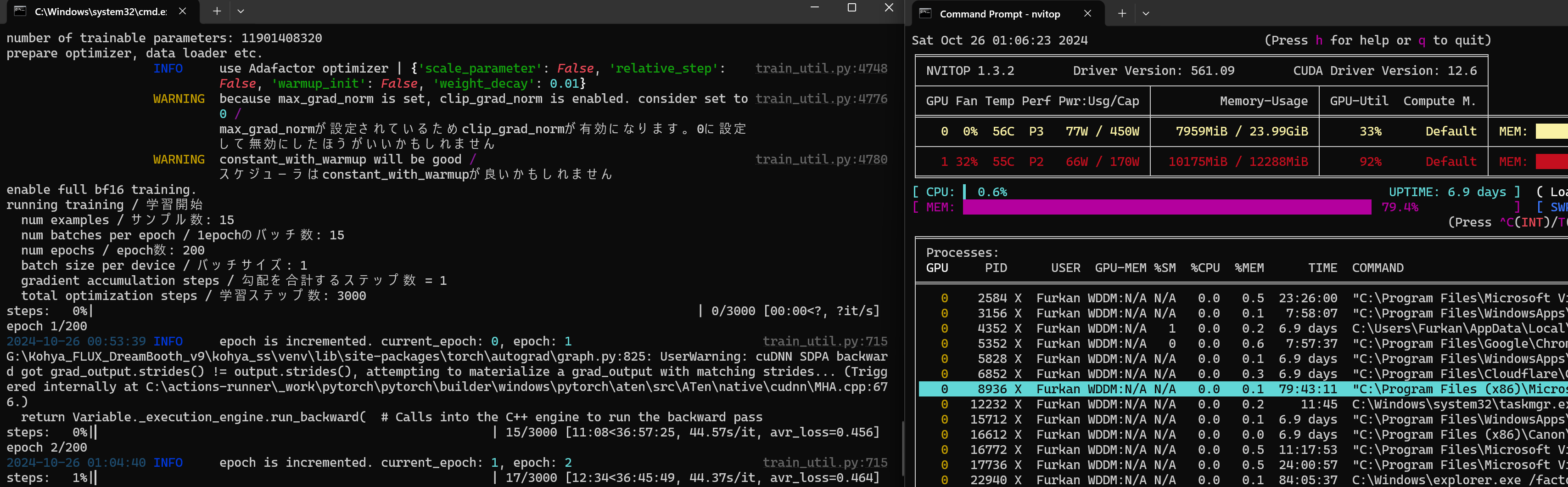

@Furkan Gözükara SECourses Could you check the v9 configs for 8GB again? With Torch 2.5 I get out-of-memory errors. With 2.4 it works, but very slowly. It was working with an earlier version of your 8GB configs.

File "E:\StabilityMatrix-RAID\Kohya_Flux\kohya_ss\sd-scripts\library\flux_models.py", line 830, in _forward

attn = attention(q, k, v, pe=pe, attn_mask=attn_mask)

File "E:\StabilityMatrix-RAID\Kohya_Flux\kohya_ss\sd-scripts\library\flux_models.py", line 449, in attention

x = torch.nn.functional.scaled_dot_product_attention(q, k, v, attn_mask=attn_mask)

torch.OutOfMemoryError: CUDA out of memory. Tried to allocate 1.90 GiB. GPU 0 has a total capacity of 8.00 GiB of which 0 bytes is free. Of the allocated memory 8.05 GiB is allocated by PyTorch, and 2.07 GiB is reserved by PyTorch but unallocated. If reserved but unallocated memory is large try setting PYTORCH_CUDA_ALLOC_CONF=expandable_segments:True to avoid fragmentation. See documentation for Memory Management (https://pytorch.org/docs/stable/notes/cuda.html#environment-variables)

steps: 0%|
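The error message itself suggests trying `PYTORCH_CUDA_ALLOC_CONF=expandable_segments:True`. A minimal sketch of one way to set it follows, assuming the training script is launched as a child process so that the variable is in place before PyTorch initializes CUDA. The helper name `with_expandable_segments` is hypothetical and not part of kohya_ss or PyTorch. The paths above are from a Windows machine, and expandable segments may not be supported on Windows builds of PyTorch, so check whether the setting actually takes effect there.

```python
import os


def with_expandable_segments(env=None):
    """Return a copy of `env` (default: the current environment) whose
    PYTORCH_CUDA_ALLOC_CONF includes expandable_segments:True.

    Hypothetical helper for illustration only.
    """
    env = dict(os.environ if env is None else env)
    current = env.get("PYTORCH_CUDA_ALLOC_CONF", "")
    # Keep any other allocator options, drop an older expandable_segments entry.
    options = [
        opt for opt in current.split(",")
        if opt and not opt.startswith("expandable_segments:")
    ]
    options.append("expandable_segments:True")
    env["PYTORCH_CUDA_ALLOC_CONF"] = ",".join(options)
    return env
```

The returned dictionary can be passed as `env=` to `subprocess.run` when starting the training command. This only targets fragmentation (the 2.07 GiB reserved but unallocated); it does not reduce the 1.90 GiB the attention call is trying to allocate.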